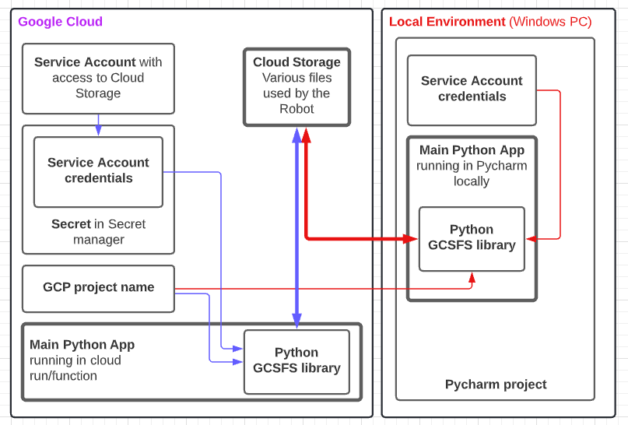

When developing a project, I want to be able to run it both in my development environment (Windows 10 + PyCharm) and in production on Google Cloud. The advanced scripts we are creating require additional files that must be stored somewhere accessible from anywhere. Google Cloud Storage seems like a reasonable place to store these working files.

The gcsfs library is really simple to use: it feels like working with files in your local filesystem rather than in cloud storage.

Let's walk through one example of how to use this library. We will need a service account that has access to Cloud Storage. Locally, its credentials are stored in the PyCharm project folder. In the cloud, the credentials are stored as a secret and mounted into our Cloud Run / Cloud Functions instance as a file with JSON content.

The credentials file looks like this (you get it from the Google Cloud console and store it as a secret in Secret Manager):

    {
      "type": "service_account",
      "project_id": "prod-dswro-cm",
      "private_key_id": "*",
      "private_key": "-----BEGIN PRIVATE KEY-----\n very long string here",
      "client_email": "[email protected]",
      …
    }
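Before handing the file to gcsfs, it can help to confirm that the mounted secret really is a service account key. The following is only a minimal sketch using the standard library: the function name `check_credentials_file` and the `EXPECTED_KEYS` tuple are made up for illustration, and the key list covers only the fields visible in the excerpt above (a real key file contains more).

```python
import json

# Keys visible in the excerpt above; real key files contain additional fields.
EXPECTED_KEYS = ("type", "project_id", "private_key_id", "private_key", "client_email")


def check_credentials_file(path):
    """Load a service account key file and return its project_id.

    Raises ValueError if the file does not look like a service account key.
    """
    with open(path, "r", encoding="utf-8") as handle:
        info = json.load(handle)
    if not isinstance(info, dict):
        raise ValueError("credentials file must contain a JSON object")
    missing = [key for key in EXPECTED_KEYS if key not in info]
    if missing:
        raise ValueError(f"credentials file is missing keys: {missing}")
    if info["type"] != "service_account":
        raise ValueError(f"unexpected credential type: {info['type']!r}")
    return info["project_id"]
```

Calling `check_credentials_file("secrets/my_storage_secret/my_storage_secret")` at startup gives a clear error message if the secret was mounted incorrectly, instead of a failure later during the first storage call.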

The setup is illustrated in the image at the top of this post.

First, import and initialize the library (if it is not installed, install it with: pip install gcsfs):

    import gcsfs

    # token points to the service account credentials file (JSON)
    google_storage = gcsfs.GCSFileSystem(
        project="my_google_project",
        token="secrets/my_storage_secret/my_storage_secret"
    )

"secrets/my_storage_secret/my_storage_secret" is the path to the same credentials file, either stored locally or mounted as a secret in the cloud.
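If the local and cloud paths ever differ, one option is to make the path configurable. The sketch below is an assumption, not part of gcsfs: `GCS_TOKEN_PATH` is a hypothetical environment variable name, and `resolve_token_path` and `make_filesystem` are illustrative helpers. The gcsfs import is done lazily so the path logic can be exercised without the library installed.

```python
import os

# Default path taken from the example above. GCS_TOKEN_PATH is a hypothetical
# environment variable chosen for this sketch; gcsfs does not read it itself.
DEFAULT_TOKEN_PATH = "secrets/my_storage_secret/my_storage_secret"


def resolve_token_path(env=None):
    """Return the credentials file path, preferring the environment override."""
    env = os.environ if env is None else env
    return env.get("GCS_TOKEN_PATH") or DEFAULT_TOKEN_PATH


def make_filesystem(project, env=None):
    """Build a GCSFileSystem, failing early if the credentials file is missing."""
    token_path = resolve_token_path(env)
    if not os.path.isfile(token_path):
        raise FileNotFoundError(f"credentials file not found: {token_path}")
    import gcsfs  # imported lazily; requires `pip install gcsfs`
    return gcsfs.GCSFileSystem(project=project, token=token_path)
```

With this in place, the same script runs unchanged in both environments, and only the environment variable (if set at all) differs between them.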

Let's create a file:

    # The path has the form "bucket_name/object_name"
    with google_storage.open(
        "my_storage_bucket/my_newly_created_file",
        "w"
    ) as new_file:
        new_file.write("this is the content of the new file\n")

That's it. The file is created. Let's read it back:

    with google_storage.open(
        "my_storage_bucket/my_newly_created_file",
        "r"
    ) as file_to_read:
        # Read from the handle opened here, not from the earlier write handle
        contents = file_to_read.read()

    print(contents)
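Because the write and read steps above only rely on an `open(path, mode)` method, they can be wrapped in small helpers that accept any filesystem-like object. This is a hedged sketch: `write_text` and `read_text` are illustrative names, not gcsfs functions, and they assume the object passed in behaves like the `google_storage` instance above.

```python
def write_text(fs, path, text):
    """Write text to path on any filesystem object that offers open(path, mode)."""
    with fs.open(path, "w") as handle:
        handle.write(text)


def read_text(fs, path):
    """Read the whole file at path as text."""
    with fs.open(path, "r") as handle:
        return handle.read()


# Intended usage with the filesystem created earlier (needs real credentials):
# write_text(google_storage, "my_storage_bucket/my_newly_created_file", "hello\n")
# print(read_text(google_storage, "my_storage_bucket/my_newly_created_file"))
```

Keeping the filesystem as a parameter means the same helpers can be exercised against a local stand-in during development and against Cloud Storage in production.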

Conclusion:

As you can see, working with the gcsfs library is really easy. I use it exclusively when working with Google Cloud Storage. The documentation for the library is located here: https://gcsfs.readthedocs.io/en/latest/

Cheers!